Data structure truth bombs for AI/ML in industrial ops

In my previous article, I highlighted how AI and ML in industrial settings, like gas compressor stations, are only as effective as the data they rely on. But quality data isn’t enough if it’s scattered or inaccessible. For AI and ML to deliver in the industrial sector, a well-defined data structure and hierarchy are essential: they keep data organized, accessible, and usable for predictive maintenance, efficiency optimization, and operational reliability.

Why data structure matters

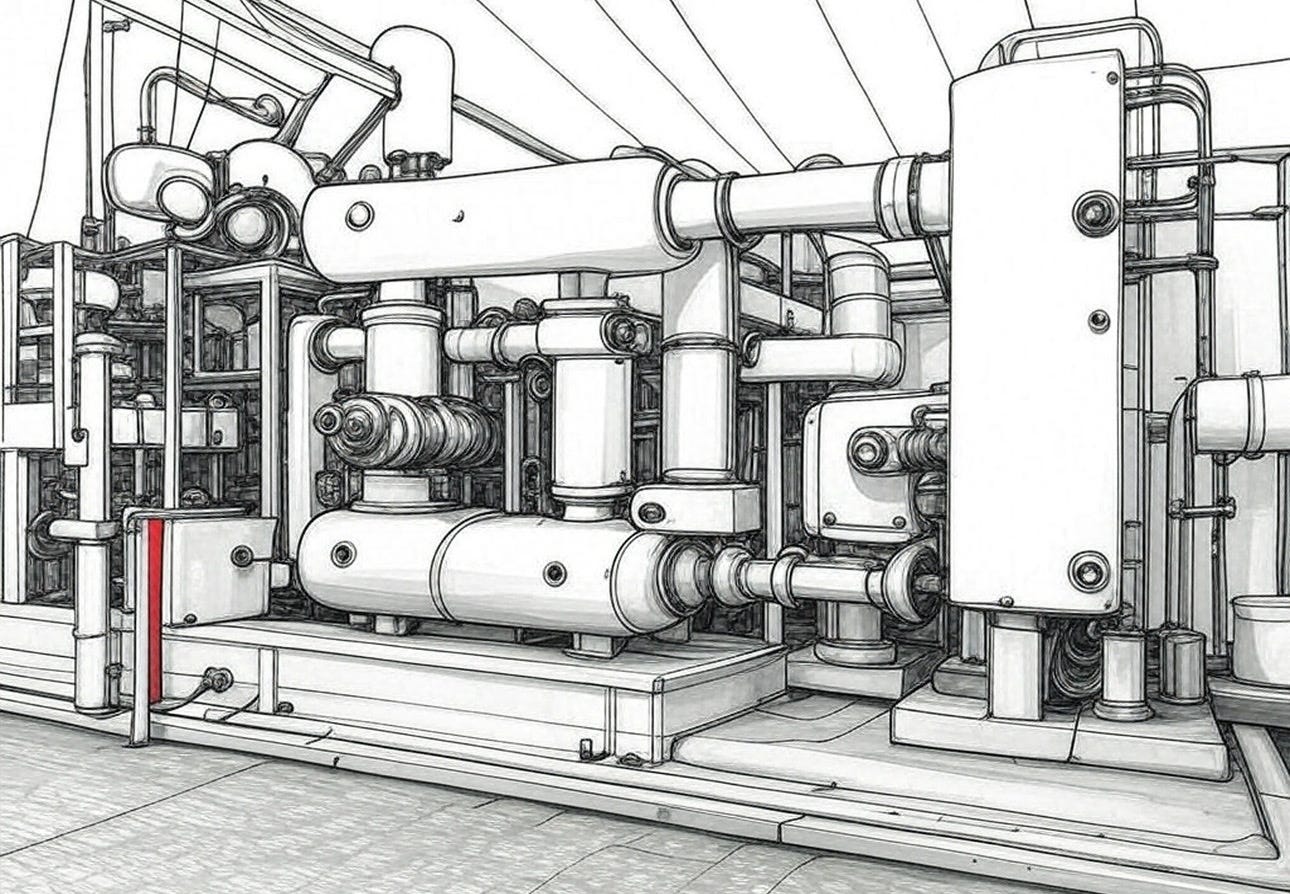

AI and ML systems depend on structured data to identify patterns and make accurate predictions. In industrial operations, equipment like a 3-stage reciprocating compressor generates vast amounts of data: pressure trends, valve performance metrics, and operational efficiency logs. A disorganized data pool can render these technologies useless. Without a clear structure, data from sensors, maintenance logs, and operational reports becomes a chaotic mess, leading to missed insights or erroneous outputs. For example, if pressure data from a compressor’s first stage is stored inconsistently across multiple systems, an ML model might misinterpret trends and fail to predict a valve failure, resulting in costly downtime.

Building a data hierarchy

A robust data hierarchy organizes information logically, ensuring AI and ML can access and process it efficiently. In a gas compressor station, this means categorizing data by equipment, stage, and parameter. Start with a top-level division by asset (e.g., Compressor A, Compressor B), then break it down by stages (first, second, third), and further by metrics (suction pressure, discharge valve performance, temperature). This hierarchy allows an AI system to quickly retrieve relevant data for analysis, such as identifying a pattern of rising discharge pressure in the second stage, signaling a potential issue. A flat or unstructured system, where all data is dumped into a single repository without categorization, slows down processing and increases the risk of errors.
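To make the asset, stage, and metric breakdown concrete, here is a minimal Python sketch. The reading values, the metric names, and the helper functions `build_hierarchy` and `is_rising` are all hypothetical, chosen only for illustration; a real station would pull readings from its historian or database rather than a hard-coded list.

```python
from collections import defaultdict

# Hypothetical flat readings: (asset, stage, metric, value).
# Names and numbers are illustrative, not real station data.
READINGS = [
    ("Compressor_A", "Stage_2", "discharge_pressure", 410.0),
    ("Compressor_A", "Stage_2", "discharge_pressure", 418.0),
    ("Compressor_A", "Stage_2", "discharge_pressure", 427.0),
    ("Compressor_A", "Stage_1", "suction_pressure", 55.0),
    ("Compressor_B", "Stage_2", "discharge_pressure", 405.0),
]


def build_hierarchy(readings):
    """Group flat readings into asset -> stage -> metric -> list of values."""
    tree = defaultdict(lambda: defaultdict(lambda: defaultdict(list)))
    for asset, stage, metric, value in readings:
        tree[asset][stage][metric].append(value)
    return tree


def is_rising(values):
    """Return True if every value is strictly greater than the one before it."""
    return len(values) > 1 and all(b > a for a, b in zip(values, values[1:]))


tree = build_hierarchy(READINGS)

# One lookup retrieves exactly the series of interest, in arrival order.
series = tree["Compressor_A"]["Stage_2"]["discharge_pressure"]
print(series, is_rising(series))
```

With the hierarchy in place, the second-stage discharge pressure for Compressor A is a single lookup instead of a scan through every record, which is the practical difference between a structured store and a flat dump. The strictly-rising check is a deliberately simple stand-in for whatever trend detection a real model would use.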

Standard operating procedures for data management

To maintain a consistent data structure, industrial operations must establish a Standard Operating Procedure (SOP) for data management. An SOP formalizes where and how data is saved, ensuring every team member follows the same protocol. For instance, the SOP might mandate that all sensor data from a reciprocating compressor be logged in a centralized database under a specific folder structure:

Compressor_A/Stage_2/Pressure_Data/YYYY-MM-DD_HR-MIN-SEC

It should also define naming conventions, backup protocols, and access permissions to prevent data loss or unauthorized changes. Creating this SOP involves mapping out data sources (sensors, manual logs), assigning responsibilities for data entry, and setting review intervals to ensure compliance. With a clear SOP, maintenance teams can trust that AI and ML systems are working with reliable, consistently organized data, maximizing their predictive power.
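A naming convention like the one above is easiest to enforce when it is generated and checked by code rather than typed by hand. The sketch below is one possible way to do that in Python. The function names `build_log_path` and `follows_convention`, the single-letter asset IDs, the stage range of 1 to 3, and the 24-hour reading of HR-MIN-SEC are my assumptions, not part of any particular SOP.

```python
import re
from datetime import datetime
from pathlib import PurePosixPath

# Pattern for the example layout:
#   Compressor_A/Stage_2/Pressure_Data/YYYY-MM-DD_HR-MIN-SEC
# Assumptions: single-letter asset IDs, stages 1 to 3, alphabetic parameter names.
PATH_PATTERN = re.compile(
    r"Compressor_[A-Z]/Stage_[1-3]/[A-Za-z]+_Data/"
    r"\d{4}-\d{2}-\d{2}_\d{2}-\d{2}-\d{2}"
)


def build_log_path(asset, stage, parameter, timestamp):
    """Build a log path in the example layout from its four components."""
    stamp = timestamp.strftime("%Y-%m-%d_%H-%M-%S")  # 24-hour clock assumed
    path = PurePosixPath(
        f"Compressor_{asset}", f"Stage_{stage}", f"{parameter}_Data", stamp
    )
    return str(path)


def follows_convention(path):
    """Return True only if the whole path matches the example layout."""
    return PATH_PATTERN.fullmatch(path) is not None


path = build_log_path("A", 2, "Pressure", datetime(2024, 3, 15, 14, 30, 5))
print(path)                                   # Compressor_A/Stage_2/Pressure_Data/2024-03-15_14-30-05
print(follows_convention(path))               # True
print(follows_convention("compA/p2/press.csv"))  # False
```

A check like `follows_convention` could run during the review intervals the SOP defines, flagging any entry that drifted from the agreed layout before it reaches a model.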

The payoff of structured data

A well-structured data hierarchy, supported by a formal SOP, amplifies the benefits of AI and ML in industrial operations. It ensures that models can quickly access relevant data, leading to faster, more accurate predictions, like flagging a failing compressor valve before it disrupts production. It also reduces manual effort in data retrieval, freeing up teams to focus on action rather than data hunting. A structured approach to data management builds on the foundation of quality data discussed in my previous article, and it is the next step to unlocking AI and ML’s full potential in the industrial sector.

Want more strategies for AI in industrial operations? Subscribe for the latest insights.

This will be a fun series of articles. Thanks!